Book Review: "The Master Algorithm" by Pedro Domingos

Fed by countless tributaries, the Amazon River is not defined by any single stream but by their convergence. In much the same way, the idea of a "master algorithm" imagines machine learning as a unifying process — where seemingly disparate methods such as linear regression, decision trees, support vector machines, and neural networks are not rivals, but parts of a larger whole. Does such a "Master Algorithm" truly exist, or is it merely an aspiration still beyond our reach?


The Master Algorithm is an excellent complement to the study of the technical foundations of machine learning. Rather than focusing on equations or implementation details, Pedro Domingos steps back and offers a conceptual map of the field, something that is often missing when one is deeply immersed in coding models or tuning hyperparameters.

One of the book's most valuable contributions is its discussion of the five machine learning "tribes": Symbolists, Connectionists, Evolutionaries, Bayesians, and Analogizers. Each tribe represents a different philosophy of learning, grouping together algorithms that share a common worldview. Before reading this book, I had been so focused on how to implement individual algorithms that I had not fully appreciated why these algorithms exist or how they relate to one another. Domingos' framework helped me see machine learning as an ecosystem rather than a collection of isolated techniques.

Symbolists emphasize the inference of explicit rules, making decision trees and logic-based systems their natural tools. Connectionists draw inspiration from the human brain, placing neural networks at the center of their approach. Evolutionaries look to biology in a different way, borrowing ideas from natural selection to create genetic algorithms. Bayesians ground learning in probability theory, treating uncertainty as a first-class citizen through Bayesian inference. Finally, Analogizers focus on learning by similarity, encompassing methods such as instance-based learning, kernel machines, and several forms of unsupervised and reinforcement learning.

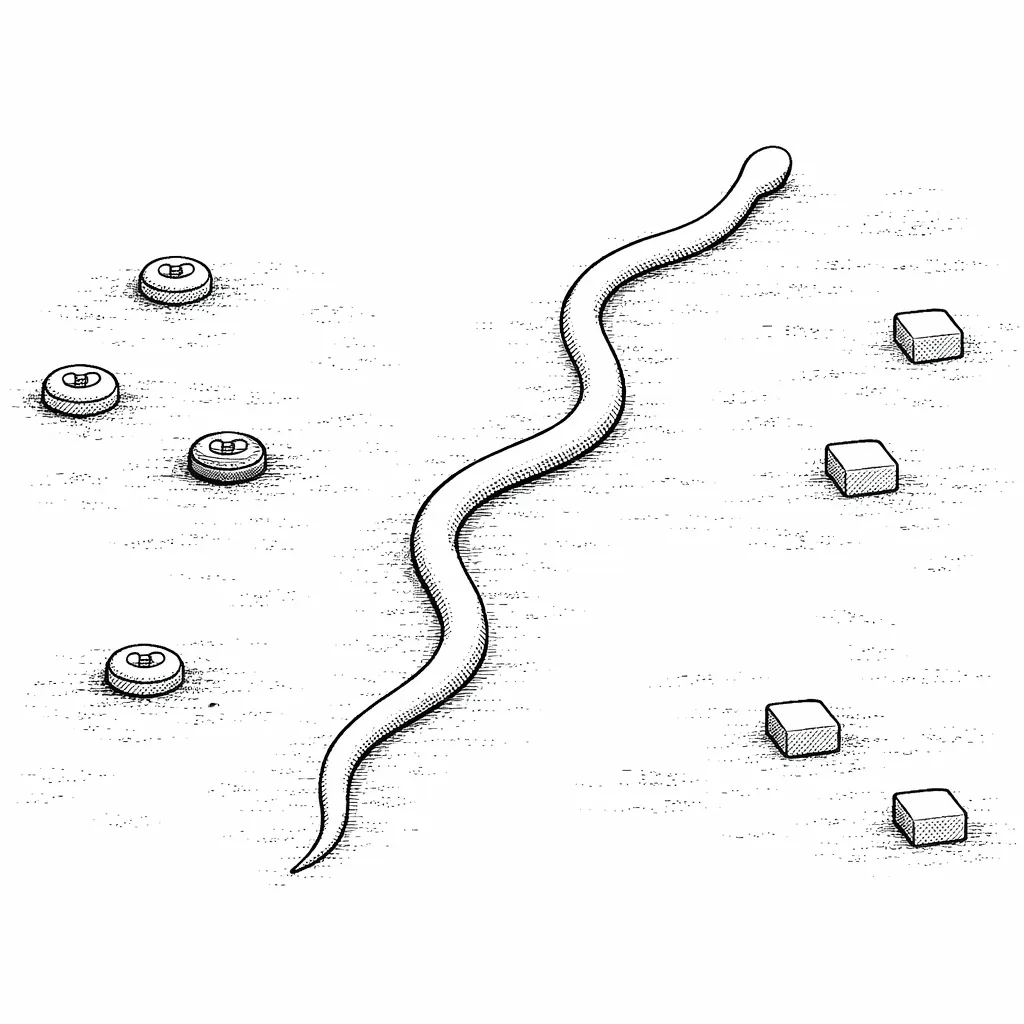

In Domingos' taxonomy, Support Vector Machines (SVMs) belong to the Analogizers tribe because they learn by geometry and similarity: they draw boundaries based not on rules or probabilities, but on distances to the closest examples. Aptly, he explains this method with the analogy of a snake moving carefully through a "No Man's Land" that separates two minefields (the minefields can be thought of as the two "classes," separated by a dividing line).
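
As a companion to the snake analogy, here is a small sketch of my own rather than anything from the book: a linear SVM fitted by subgradient descent on the regularized hinge loss. This is only one of several ways to train an SVM (production libraries such as LIBSVM use specialized solvers instead), and the toy points, function names, and hyperparameters (`lam`, `lr`, `epochs`) are hypothetical choices for illustration.

```python
import math

# Hypothetical toy data: two "minefields" of 2-D points labelled +1 and -1.
POSITIVE = [(2.0, 2.0), (3.0, 3.0), (2.0, 3.0)]
NEGATIVE = [(-2.0, -2.0), (-3.0, -3.0), (-2.0, -3.0)]


def train_linear_svm(points, labels, lam=0.01, lr=0.01, epochs=2000):
    """Soft-margin linear SVM fitted by full-batch subgradient descent on
    (lam / 2) * ||w||^2 + mean(max(0, 1 - y * (w . x + b)))."""
    w = [0.0, 0.0]
    b = 0.0
    n = len(points)
    for _ in range(epochs):
        # Gradient of the regularizer, which keeps the weights small (the margin wide).
        grad_w = [lam * w[0], lam * w[1]]
        grad_b = 0.0
        for (x1, x2), y in zip(points, labels):
            # Only points inside the margin (or misclassified) push on the boundary.
            if y * (w[0] * x1 + w[1] * x2 + b) < 1.0:
                grad_w[0] -= y * x1 / n
                grad_w[1] -= y * x2 / n
                grad_b -= y / n
        w = [w[0] - lr * grad_w[0], w[1] - lr * grad_w[1]]
        b -= lr * grad_b
    return w, b


def predict(w, b, point):
    """Classify a point by which side of the boundary it falls on."""
    return 1 if w[0] * point[0] + w[1] * point[1] + b >= 0 else -1


def geometric_margin(w, b, points, labels):
    """Distance from the boundary to the closest example."""
    norm = math.hypot(w[0], w[1])
    return min(y * (w[0] * x1 + w[1] * x2 + b) / norm
               for (x1, x2), y in zip(points, labels))


if __name__ == "__main__":
    points = POSITIVE + NEGATIVE
    labels = [1] * len(POSITIVE) + [-1] * len(NEGATIVE)
    w, b = train_linear_svm(points, labels)
    print("weights:", w, "bias:", b)
    print("margin:", geometric_margin(w, b, points, labels))
    print("new point (4, 4) ->", predict(w, b, (4.0, 4.0)))
```

Once a point lies comfortably outside the margin, it stops contributing to the update, so only the examples nearest the boundary (the support vectors) end up shaping it. That is the "distance to the closest examples" idea in miniature.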


At first glance, these approaches appear radically different. However, Domingos convincingly argues that all machine learning algorithms share the same fundamental components: representation, optimization, and evaluation. What differs is not the essence of learning itself, but the form these components take in each tribe. This unifying perspective is particularly valuable for beginners, as it reduces the cognitive overload that often comes with encountering many algorithms at once.
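
To see how the three components fit together in a single learner, here is a minimal Python sketch of my own (not from the book), assuming the simplest possible case: the representation is a straight line y = w*x + b, the evaluation is mean squared error, and the optimization is gradient descent. The function names, learning rate, and toy data are hypothetical.

```python
# Representation: the hypothesis space is every line y = w * x + b.
def predict(params, x):
    w, b = params
    return w * x + b


# Evaluation: mean squared error scores how well a candidate line fits the data.
def mse(params, xs, ys):
    return sum((predict(params, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Optimization: gradient descent searches the hypothesis space for a low-error line.
def fit(xs, ys, lr=0.05, steps=2000):
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Partial derivatives of the mean squared error with respect to w and b.
        grad_w = sum(2 * (predict((w, b), x) - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (predict((w, b), x) - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


if __name__ == "__main__":
    xs = [0.0, 1.0, 2.0, 3.0, 4.0]
    ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # toy data generated by y = 2x + 1
    params = fit(xs, ys)
    print("learned (w, b):", params, "error:", mse(params, xs, ys))
```

Each component can be swapped independently: replacing squared error with absolute error changes the evaluation, while replacing the line with a decision tree changes the representation and would also call for a different optimizer, such as greedy splitting.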

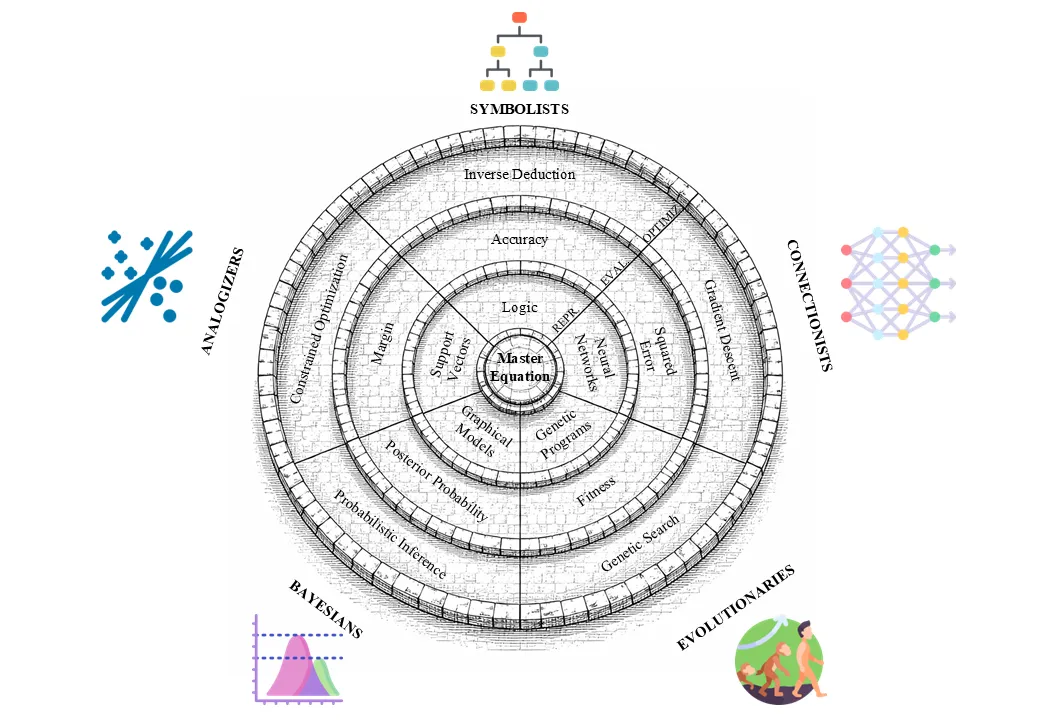

Towards the end of the book, Domingos synthesizes his idea that all the machine learning tribes are pieces of a single "master algorithm," each with its own representation, optimization, and evaluation methods. He pictures this as a castle with concentric walls: the outermost ring corresponds to optimization methods, the middle ring to evaluation methods, and the innermost ring to representation methods. At the center of the castle lies the "Master Equation." (This figure was creatively enhanced with the help of ChatGPT 5.2; the original figure is credited to the author.)


From this foundation emerges the book's central thesis: the search for a "Master Algorithm," a single learning framework capable of integrating the strengths of all five tribes (hence the book's title). Domingos argues that such an algorithm would not merely be an academic achievement, but a transformative force capable of reshaping science, medicine, economics, and society at large. This idea resonated strongly with me, especially when compared with the long-standing quest for unification in physics, such as the pursuit of a Grand Unified Theory. Yet Domingos makes it clear that the ambition here is arguably even greater: a Master Algorithm would be a machine capable of discovering knowledge across any domain.

To support the plausibility of this vision, the author draws parallels from multiple disciplines — physics, computer science, and biology — each of which has historically progressed toward unifying principles. This interdisciplinary justification strengthens the book's argument and prevents it from sounding like mere speculation.

In the final chapters, Domingos proposes a candidate direction for a Master Algorithm based on his own research, while openly acknowledging that it remains incomplete. He then shifts his focus to the future, discussing how machine learning could revolutionize fields such as cancer research, while also addressing ethical concerns surrounding data privacy, algorithmic power, and autonomous warfare. His discussion of robotic warfare, in particular, stood out to me for its sobering realism and ethical nuance.

Is there truly a Master Algorithm? The book does not offer a definitive answer, and perhaps that is its greatest strength. Instead, it invites the reader to participate in the quest itself. Personally, this book made me want to be part of that journey. If truly intelligent machines are ever to exist, I believe that the search for a Master Algorithm will play a central role in making them possible.